Image credit: Zachary del Rosario

Abstract

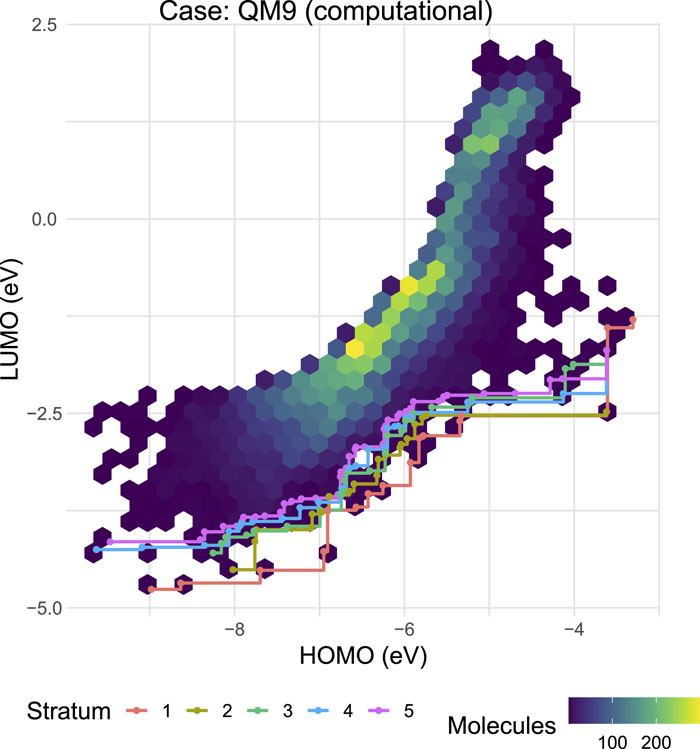

Discovering novel chemicals and materials can be greatly accelerated by active learning: the iterative, machine-learning-informed proposal of candidates. However, standard global error metrics for model quality are not predictive of discovery performance and can be misleading. We introduce the notion of Pareto shell error to help judge the suitability of a model for proposing candidates. Furthermore, through synthetic cases, an experimental thermoelectric dataset, and a computational organic molecule dataset, we probe the relation between acquisition function fidelity and active learning performance. The results suggest novel diagnostic tools, as well as new insights for acquisition function design.

Publication

The Journal of Chemical Physics